It was a beautiful Sydney winter’s day to watch the Swans play Carlton in the last round of the regular season on Saturday. This match was my first major data-collection session, and from here I’ll begin processing the audio and video recordings of the crowd, alongside some broader contextual information I’ll be gathering.

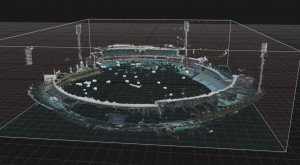

One key task is to establish a classification system for crowd activity that covers not only volume and intensity, but also sentiment and location. Something you notice at the AFL in particular is how localized crowd noise can be, because of the enormous distance between one end of the oval and the other. The term ‘crowd’ tends to simplify and homogenize what is, in fact, a very diverse and dynamic system of individuals. It can be quite difficult to see what’s happening at the opposite end of the field, and the players only interact directly with the crowd near the boundary. Combining player GPS data with location-specific audio recordings should provide a spatial account of this interaction.
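
To make the idea a bit more concrete, here is a rough Python sketch of how a labelled stretch of crowd audio might be stored, and how a player's GPS position could be matched to the closest recorder. Everything in it is an assumption for illustration: the class and function names, the label vocabularies, the recorder names and their coordinates (metres from the centre of the oval) are all made up and are not the actual setup from the match.

```python
from dataclasses import dataclass
from math import hypot


@dataclass(frozen=True)
class Recorder:
    """A hypothetical audio recorder at a fixed spot around the oval."""
    name: str
    x: float  # metres from the oval centre along the long axis (assumed)
    y: float  # metres from the oval centre along the short axis (assumed)


@dataclass(frozen=True)
class CrowdSegment:
    """One labelled stretch of crowd audio (label vocabularies are assumed)."""
    recorder: str     # which recorder captured it, i.e. the location
    start_s: float    # seconds from the start of the recording
    volume_db: float  # measured loudness
    intensity: str    # e.g. "low", "medium", "high"
    sentiment: str    # e.g. "positive", "negative", "neutral"


# Illustrative positions only, not surveyed locations.
RECORDERS = [
    Recorder("end_a", 80.0, 0.0),
    Recorder("end_b", -80.0, 0.0),
    Recorder("wing", 0.0, 65.0),
]


def nearest_recorder(px: float, py: float, recorders: list[Recorder]) -> Recorder:
    """Return the recorder closest (straight-line distance) to a player position."""
    return min(recorders, key=lambda r: hypot(r.x - px, r.y - py))


# A player deep in one pocket is matched to the recorder at that end.
closest = nearest_recorder(60.0, 10.0, RECORDERS)
segment = CrowdSegment(closest.name, start_s=125.0, volume_db=92.5,
                       intensity="high", sentiment="positive")
print(closest.name, segment.sentiment)
```

Straight-line distance to the nearest recorder is only a first approximation; it says nothing about how sound actually carries across the ground, so it would need checking against the recordings themselves.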